What has actually changed?

The underlying conditions and requirements of design solutions have undergone a dramatic change over recent years: whereas the design process was once aimed at creating brand identities that would make their difference felt over the long term, nowadays rapidly changing services and products must stand out from the competition immediately.

Previously, design rules provided cues for ensuring effective communication. Today, touchpoints are affordable and continuously measurable, so it is possible to verify whether a solution has achieved its intended objectives. As a result, the demand for measurable success is growing, in tandem with the increasing relevance of digital channels for business. In addition, new digital touchpoints are constantly appearing for which no empirical values (and thus no rules) yet exist.

As such, designers are facing much shorter development and communication cycles, together with increased demands for efficiency from solutions, with visual design only one component of overall success. After all, the product as a whole must work for the defined target groups, and the design process must be significantly faster. This means that we have to accelerate and systematise our learning processes.

Of course, the user’s perspective has long been integrated into the design process by means of user-centred design principles. The problem is that usability tests are often too expensive and time-consuming to be integrated into the design process on an ongoing basis, especially for granular issues.

Conversion rate optimisation has evolved into a discipline of its own, and does at least evaluate and optimise solutions on the basis of a specific key figure. In practice, however, this method is often not anchored in a holistic way. As a result, it is only used selectively, while designers lack sound input for developing solutions. What’s more, focusing on conversion alone can mask important aspects of a good user experience.

Despite the best of intentions, the reality is that both methods suggest solutions on the basis of assumptions and past experience, and thus fail to really grapple with the actual problem.

As such, the following principles are required as an essential part of our practice:

- Creating targeted types of design to answer specific questions, rather than asking questions based on designs that happen to have been produced before.

- Not making design decisions based on mere assumptions, but rather on predetermined decision metrics.

- Integrating and evaluating user knowledge within our design process more quickly.

Data: solution and challenge?

Current design processes are clearly not capable of this, so thinking outside the box and connecting with other skills is vital. Data analysts already demonstrate objectivity, measurability and efficient analysis. Linking up with this skill is the logical next step and, precisely because of its highly analytical nature, it provides the perfect counterpart to design, which is often an intuitive discipline. Both disciplines work extremely well within many companies, but unfortunately they tend to work in isolation. Exchange, let alone collaboration, is rare, and unsatisfactory results can often lead to frustration among employees.

At foryouandyourcustomers, we recognise the potential that lies in combining the expertise of designers and data analysts, and are therefore developing a framework that creates a sound link between both skills within a project and company context, in a sustained way that adds value. Of course, not everything can be solved by the process alone: a ‘design to learn’ culture is hugely important. This means that an ostensibly poor test result should not be seen as a failing on the part of the designer, but rather celebrated as knowledge gained.

What does data-driven design look like in practice?

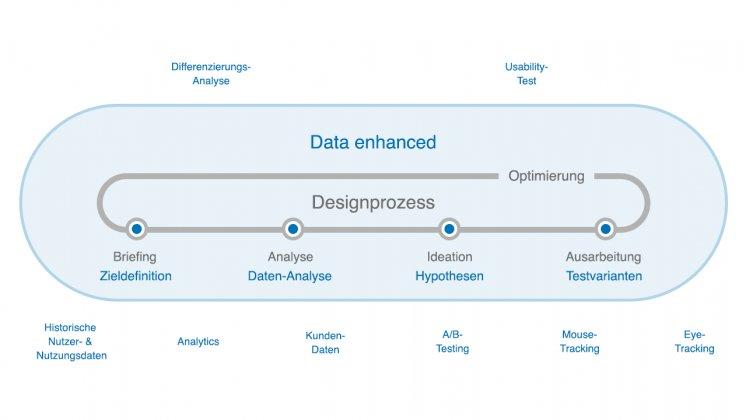

Every single stage of the design process can be enhanced by data analysis, even if only in an advisory capacity. A wide range of methods can be used flexibly during the process. However, a clear model of collaboration is needed in order to jointly develop metrics, test scenarios and suitable test solutions, so that tests and findings prove to be of lasting value.

- Target definition

As with classic design projects, a data-driven design project also begins with defining a target. This could be something along the lines of ‘We want a higher retention rate’ or ‘We want to improve customer satisfaction’.

- Data analysis and problem space definition

Based on the definition of objectives, a data analyst examines the available data in view of the goal. The problem space is defined in conjunction with consultants and designers. They may find, for instance, that the rate of newsletter unsubscribes is very high, which would represent a possible problem space, given the ‘higher retention rate’ objective.

- Generating and selecting hypotheses

Within the problem space, we design possible solution hypotheses and compare their suitability for testing, together with a data analyst. For our newsletter example, we would set out hypotheses for areas such as altered newsletter content, more stimulating newsletter design or optimised landing pages. Equally, however, it is also possible to create impetus for the product and marketing team, as design solutions only go some of the way towards achieving the objective.

- Test version design and selection

The chosen hypotheses are implemented as different design versions, albeit all with the clear aim of increasing customer loyalty. Findings from previous tests may exclude or influence hypotheses as part of this process. The hypotheses determine the test set-up, which in turn dictates the design of the different versions. As such, an adjustment may affect only one module or touchpoint (such as an explicit call to action in the newsletter), or several touchpoints (such as corresponding approaches in the newsletter and on the landing page).

- Test evaluation

We work with data analysts to evaluate the test results in terms of our test hypotheses and analyse whether the test could lead to further insights or test cases.
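
For the newsletter example, the evaluation step could be sketched as follows. This is a minimal illustration and not part of our framework: it assumes an A/B test with two newsletter versions and uses a two-proportion z-test, which is one common way of comparing two rates. The function name `two_proportion_z_test` and all figures are hypothetical.

```python
from math import erf, sqrt


def two_proportion_z_test(events_a, n_a, events_b, n_b):
    """Return (z, two-sided p-value) for the difference between two rates.

    events_* is the number of recipients who unsubscribed,
    n_* is the number of recipients who received that version.
    """
    rate_a = events_a / n_a
    rate_b = events_b / n_b
    # Pooled rate under the null hypothesis that both versions perform equally
    pooled = (events_a + events_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_error == 0:
        raise ValueError("rates are all 0 or all 1; the test is undefined")
    z = (rate_b - rate_a) / std_error
    # Standard normal CDF expressed through the error function
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    p_value = 2 * (1 - cdf)
    return z, p_value


# Hypothetical figures: version A is the existing newsletter,
# version B has an altered design.
z, p = two_proportion_z_test(events_a=300, n_a=10_000, events_b=240, n_b=10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A negative `z` here means that version B had the lower unsubscribe rate, and a small p-value suggests that the difference is unlikely to be pure chance. Whether the difference is large enough to matter remains a judgement for designers and analysts to make together.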

The right focus, the right method, the right metric

When is it appropriate to use data-driven design?

Data-driven design essentially accompanies the entire product life cycle and ensures that design decisions are made based on actual knowledge. The granularity of the decisions – and thus the test methods and metrics, not to mention the design of the test versions – adapts to the respective phase.

Are usability tests now superfluous?

Qualitative knowledge, e.g. an issues list from user testing, also constitutes data and can be used to steer the design process. Qualitative testing is essential in order to solve the biggest usability problems for a given test version and thus increase the level of maturity of the individual design. In order to communicate the ‘why’ to the team and provide input for possible solutions, qualitative methods are simply indispensable.

Conversely, insights gained from quantitative data (e.g. A/B testing, historical user figures), and especially those gathered with a specific target group, in a real-life context and on actual devices, can corroborate design decisions in an objective way. Quantitative data reveals its full potential particularly when it comes to strategic directions (e.g. choosing a channel) and highly detailed design decisions (e.g. button colour) – in other words, in areas where the user may not be able to communicate their motivation – as it is independent of personal opinions and allows many different aspects to be examined.

Is the best design the one that’s best at selling?

Data-driven design is all about working together to find the right question, defining objectives and thus channelling creative potential. This also means defining the right metrics and tools of analysis. After all, the solution that achieves the highest results in the short term isn’t necessarily the best one; instead it might be one that lowers the number of returns or increases customer loyalty.
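
To illustrate why the choice of metric matters, the following minimal sketch compares two hypothetical design versions using two different metrics. The class `VariantResult`, the helper `best_by`, the metric names and all figures are assumptions made up for this example, not real project data.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    name: str
    visitors: int
    orders: int
    returns: int  # orders that were later sent back

    @property
    def conversion_rate(self) -> float:
        # Short-term metric: share of visitors who placed an order
        return self.orders / self.visitors

    @property
    def kept_order_rate(self) -> float:
        # Longer-term metric: share of visitors whose order was not returned
        return (self.orders - self.returns) / self.visitors


def best_by(results, metric):
    """Return the name of the variant that scores highest on the given metric."""
    return max(results, key=metric).name


# Hypothetical test results for two design versions
results = [
    VariantResult("A", visitors=10_000, orders=500, returns=150),
    VariantResult("B", visitors=10_000, orders=450, returns=60),
]

print("Best by conversion rate:", best_by(results, lambda r: r.conversion_rate))
print("Best by kept-order rate:", best_by(results, lambda r: r.kept_order_rate))
```

With these made-up numbers, version A wins on conversion while version B wins once returns are taken into account, which is why the metric should be agreed before the test versions are designed.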

How do things proceed?

Even if your company already has a very high level of data maturity or you have already achieved superb design quality, only in the rarest of cases is the data design process truly end to end and data usage seen holistically. We would be pleased to help you establish appropriate framework conditions and services, or elements thereof, in order to produce touchpoints that demonstrably perform.

Please don’t hesitate to get in touch with us if you want to get to know the Data Analytics and UX Design team.

foryouandyourcustomers GmbH

Augustenstraße 44

70178 Stuttgart

Phone: +49 160 64 32 099

https://foryouandyourcustomers.com/

Head of Communications

Phone: +49 (711) 21952788

Email: haw@foryouandyourcustomers.com
